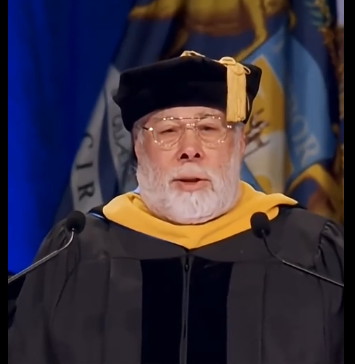

Apple co-founder Steve Wozniak told graduates at Grand Valley State University that they already possess “AI,” which he said stands for “Actual Intelligence.” Rather than praising artificial intelligence, he emphasized that human creativity, critical thinking, and emotional understanding are far more valuable than any machine, however dry or perfect.

While other commencement speakers, such as former Google CEO Eric Schmidt, were booed by students for praising artificial intelligence, Wozniak was met with roaring applause. He has previously said that he finds current AI disappointing because it lacks genuine human understanding, and that he prefers organic intelligence to the hype surrounding generative technology.